Free Website & Server Migration

Deploy High-Performance NVIDIA H100 GPUs

Ultra-powerful NVIDIA H100 GPU servers for AI training, large language models, deep learning, and high-performance computing, on scalable cloud or dedicated GPU infrastructure.

- Optimized for AI & LLM workloads

- High-performance GPU infrastructure

- Scalable cloud deployment

- Enterprise-grade compute performance

Starting at

€3.30 per GPU / hour

Customer Satisfaction

Colonel is rated on Google Reviews

Colonel is rated on Capterra

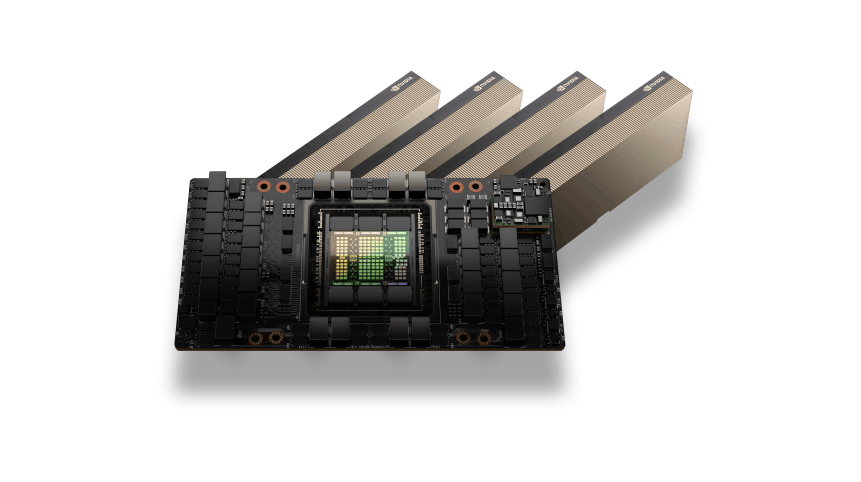

NVIDIA H100 GPU Architecture

The NVIDIA H100 GPU is built on the Hopper architecture and designed to deliver extreme performance for modern AI infrastructure. With advanced Tensor Cores and high-bandwidth memory, H100 GPUs accelerate large-scale deep learning workloads and complex AI training pipelines.

This architecture enables faster model training, improved efficiency for transformer models, and optimized performance for large language models and generative AI applications.

Large-Scale AI and Compute Performance

NVIDIA H100 GPUs deliver exceptional compute performance for the most demanding AI workloads. From training massive neural networks to running real-time inference for large language models, H100 GPUs process large datasets and complex machine learning tasks efficiently.

Whether deployed for AI research, enterprise AI infrastructure, or large-scale HPC environments, H100 GPUs deliver reliable performance and scalable compute power.

NVIDIA H100 GPU Use Cases

Large Language Model Training

Train advanced transformer models and generative AI systems at scale.

Large-Scale AI Inference

Run high-performance inference pipelines for chatbots, AI assistants, and LLM applications.

High-Performance Computing

Accelerate scientific research, engineering simulations, and complex computational workloads.

AI Research and Development

Develop next-generation AI architectures and experimental machine learning models.

Data Processing and Analytics

Process massive datasets for machine learning pipelines and enterprise analytics workloads.

Flexible H100 GPU Pricing

H100 GPU

Enterprise-grade NVIDIA H100 GPU compute built for AI training, large language models, and high-performance computing workloads.

$3.30 per GPU / hour

Best Price

NVIDIA H100 Tensor Core GPU acceleration

80 GB of high-bandwidth HBM3 GPU memory

Hourly pay-as-you-go GPU billing

High-performance NVMe storage

Ultra-fast 100 Gbps networking

Ideal for training large AI models

Scalable GPU cloud infrastructure

Deploy GPU instances in minutes

Optimized for LLM and generative AI workloads
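With hourly pay-as-you-go billing, the GPU cost of a job is roughly the number of GPUs times the hours used times the hourly rate. The Python sketch below assumes the listed rate of 3.30 per GPU-hour and purely linear billing; minimum charges, discounts, storage, and other fees are not modeled, and the function name `estimate_cost` is hypothetical.

```python
# Listed hourly rate per H100 GPU (currency as shown in the plan above).
H100_RATE_PER_GPU_HOUR = 3.30


def estimate_cost(num_gpus: int, hours: float, rate: float = H100_RATE_PER_GPU_HOUR) -> float:
    """Hypothetical pay-as-you-go estimate: GPUs x hours x hourly rate.

    Assumes simple linear hourly billing with no minimums, discounts,
    storage, or network charges.
    """
    if num_gpus < 1 or hours < 0:
        raise ValueError("num_gpus must be >= 1 and hours must be >= 0")
    return round(num_gpus * hours * rate, 2)


# Example: 8 GPUs for a 24-hour training run.
print(estimate_cost(8, 24))
```

Under these assumptions, an 8-GPU, 24-hour run comes to 192 GPU-hours, or 633.60 at the listed rate.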

Enterprise GPU Infrastructure

Need large-scale GPU capacity for AI training clusters or enterprise workloads?

Custom pricing

For multi-GPU deployments and dedicated clusters

H100 multi-GPU clusters

Dedicated GPU servers

Custom CPU, RAM, and storage configurations

High-speed GPU networking infrastructure

Built for AI training and HPC workloads

Enterprise-grade performance and reliability

Scalable AI compute environments

Priority technical support

Enterprise Features of NVIDIA H100 GPU Servers

Hopper GPU Architecture

Built on the NVIDIA Hopper architecture, optimized for modern AI infrastructure and advanced computing.

High-Bandwidth GPU Memory

High-performance GPU memory designed to process large datasets and complex AI models efficiently.

Optimized for LLM Workloads

Ideal for large language models, transformer architectures, and generative AI workloads.

High-Performance GPU Infrastructure

GPU servers run on high-throughput infrastructure with NVMe storage and fast networking.

Scalable Multi-GPU Environments

Scale from single-GPU deployments to large multi-GPU clusters for enterprise AI workloads.

Flexible Cloud or Dedicated Deployment

Deploy H100 GPUs as cloud instances or dedicated GPU servers, depending on your infrastructure needs.

Need help choosing the right GPU infrastructure?

GPU Server Frequently Asked Questions

Find answers to common questions about NVIDIA H100 GPU servers, deployment options, pricing, and AI workload capabilities.

Live Chat

Available 24/7/365 through the chat widget.

NVIDIA H100 GPU hosting provides high-performance AI infrastructure powered by the NVIDIA Hopper architecture. It is designed for advanced machine learning, large language models, deep learning training, and high-performance computing workloads that require massive GPU acceleration.

Yes. The NVIDIA H100 is one of the most powerful GPUs available for AI training. It is widely used to train large language models, generative AI systems, and complex deep learning networks that require high compute performance and fast memory bandwidth.

The NVIDIA H100 GPU typically includes 80 GB of HBM3 memory, offering very high bandwidth and capacity. This lets it handle very large datasets and AI models used in deep learning, scientific computing, and advanced data processing.
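Whether a model fits in 80 GB can be checked with back-of-the-envelope arithmetic: weight memory is the parameter count times the bytes per parameter (2 bytes for FP16/BF16). For mixed-precision training with the Adam optimizer, a common rule of thumb is about 16 bytes per parameter before activations. The Python sketch below applies these rules; the helper names are hypothetical, and the results are lower bounds that ignore activations, KV cache, and framework overhead.

```python
import math

# Per-GPU memory as listed on this page (80 GB HBM3); usable capacity is slightly lower in practice.
H100_MEMORY_GB = 80

# Bytes needed to store one parameter in each numeric format.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}

# Rule of thumb for mixed-precision training with Adam:
# 2 (fp16/bf16 weights) + 2 (gradients) + 12 (fp32 master weights + two Adam moments).
TRAINING_BYTES_PER_PARAM = 16


def weights_gb(num_params: float, dtype: str = "bf16") -> float:
    """Memory for the model weights only, in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def training_gb(num_params: float) -> float:
    """Weights, gradients, and Adam state for mixed-precision training; excludes activations."""
    return num_params * TRAINING_BYTES_PER_PARAM / 1e9


def min_gpus(required_gb: float, per_gpu_gb: float = H100_MEMORY_GB) -> int:
    """Lower bound on the number of GPUs whose combined memory holds required_gb."""
    return max(1, math.ceil(required_gb / per_gpu_gb))


for params in (7e9, 70e9):
    inference = weights_gb(params)
    training = training_gb(params)
    print(f"{params / 1e9:.0f}B params: weights {inference:.0f} GB -> at least {min_gpus(inference)} x H100; "
          f"training state {training:.0f} GB -> at least {min_gpus(training)} x H100")
```

For example, a 70-billion-parameter model in BF16 needs about 140 GB for its weights alone, so it cannot fit on a single 80 GB GPU and must be split across at least two.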

NVIDIA H100 GPU servers are commonly used for AI model training, large language models, generative AI, data analytics, scientific simulations, and high-performance computing workloads that require massive parallel processing.

Yes. NVIDIA H100 GPU servers fully support modern AI frameworks such as PyTorch and TensorFlow, as well as CUDA applications and other GPU-accelerated tools used to train and deploy AI models.

Colonelserver provides powerful GPU infrastructure with high-performance networking and scalable compute resources. Its NVIDIA H100 GPU servers are designed for AI engineers, data scientists, and organizations running demanding AI and machine learning workloads.