Free Website & Server Migration

Deploy High-Performance NVIDIA A40 GPUs

Run powerful NVIDIA A40 GPU servers for AI training, rendering, machine learning, and high-performance computing on scalable cloud or dedicated GPU infrastructure.

- Optimized for AI & LLM Workloads

- High-Performance GPU Infrastructure

- Scalable Cloud Deployment

- Enterprise-Grade Compute Performance

Starting at

€1.10 per GPU / hour

Customer Satisfaction

Colonel is rated on Google Reviews

Colonel is rated on Capterra

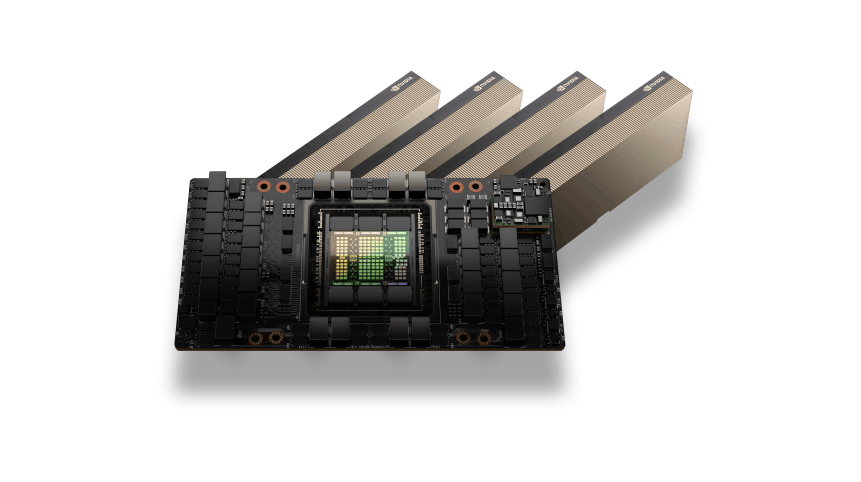

NVIDIA A40 GPU Architecture

The NVIDIA A40 GPU is built on the Ampere architecture and is designed to accelerate modern AI workloads, graphics rendering, and data center applications. With powerful Tensor Cores and a large GPU memory capacity, A40 GPUs deliver reliable performance for machine learning training, inference, and advanced visualization workloads.

The A40 is widely used in data centers to support deep learning, virtual workstations, and large-scale data processing while maintaining high efficiency and stability.

AI, Rendering and HPC Performance

NVIDIA A40 GPUs provide strong acceleration for AI workloads, deep learning pipelines, and high-performance computing environments. With high memory capacity and an optimized GPU architecture, the A40 enables efficient processing of complex machine learning models and large datasets.

It is also highly effective for GPU rendering, simulation environments, and professional visualization workloads, making it suitable for enterprise AI infrastructure and creative industries.

NVIDIA A40 GPU Use Cases

AI Model Training

Train machine learning and deep learning models using the powerful compute capabilities of NVIDIA A40 GPUs.

GPU Rendering Workloads

Accelerate 3D rendering, animation production, and visual effects processing.

Virtual Workstations

Deploy GPU-powered virtual desktops and remote workstations for design, engineering, and visualization.

Data Science and Analytics

Process large datasets and machine learning pipelines efficiently.

Scientific and Engineering Simulations

Run simulations, modeling tasks, and complex computational workloads.

Flexible A40 GPU Pricing

A40 GPU

Flexible, on-demand GPU compute designed for AI training, rendering, and high-performance applications.

$1.10 per GPU / hour

Best Price | Featured

NVIDIA A40 GPU acceleration

48 GB of GDDR6 GPU memory

Hourly, pay-as-you-go GPU billing

High-performance NVMe storage

Fast 10 to 100 Gbit/s networking

Ideal for AI training and rendering workloads

Scalable GPU cloud infrastructure

Deploy GPU instances in minutes

Optimized for machine learning pipelines
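Because billing is hourly and pay-as-you-go, a rough budget for a job is the hourly rate multiplied by the number of GPUs and the run time. The sketch below makes that arithmetic explicit. It assumes purely linear billing with no rounding of partial hours and no separate charges for storage, bandwidth, or taxes. All of these are assumptions, not confirmed billing terms. It also leaves the currency open, since this page lists the 1.10 rate in both euros and dollars.

```python
def estimate_gpu_cost(rate_per_gpu_hour: float, num_gpus: int, hours: float) -> float:
    """Estimate the cost of a pay-as-you-go GPU job.

    Assumption: linear billing (rate x GPUs x hours) with no rounding of
    partial hours and no extra storage, bandwidth, or tax charges.
    """
    if rate_per_gpu_hour < 0 or num_gpus < 0 or hours < 0:
        raise ValueError("rate, GPU count, and hours must be non-negative")
    return rate_per_gpu_hour * num_gpus * hours


if __name__ == "__main__":
    # Example: 4 A40 GPUs for a 10-hour training run at the listed 1.10 rate.
    cost = estimate_gpu_cost(1.10, 4, 10)
    print(f"Estimated cost: {cost:.2f}")  # prints "Estimated cost: 44.00"
```

For longer or multi-node jobs, the same formula shows why the custom-priced enterprise option can matter: cost scales linearly with both GPU count and hours.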

Enterprise GPU Infrastructure

Need large-scale GPU capacity for AI training clusters or enterprise workloads?

Custom pricing

For multi-GPU deployments and dedicated clusters | Featured

Multi-GPU clusters

Dedicated GPU servers

Custom CPU, RAM, and storage configurations

High-throughput GPU networking infrastructure

Built for AI training and HPC workloads

Enterprise-grade performance and reliability

Scalable AI compute environments

Priority technical support

Enterprise Features of NVIDIA A40 GPU Servers

Ampere Architecture Acceleration

NVIDIA A40 GPUs are based on the Ampere architecture, designed for AI and professional computing workloads.

Large GPU Memory Capacity

High-capacity GPU memory enables efficient processing of complex AI models and large datasets.

Optimized for AI and Visualization

Ideal for deep learning workloads, data science applications, and professional rendering tasks.

High-Speed GPU Infrastructure

GPU servers run on high-performance infrastructure with NVMe storage and fast networking.

Scalable GPU Deployment

Scale your compute environment from a single GPU instance to large GPU clusters.

Flexible Cloud or Dedicated Deployment

Choose between cloud GPU instances and dedicated GPU servers based on your workload requirements.

Need help choosing the right GPU infrastructure?

GPU Server Frequently Asked Questions

Find answers to common questions about NVIDIA A40 GPU servers, deployment options, pricing, and AI workload capabilities.

Live Chat

Available 24/7/365 through the chat widget.

NVIDIA A40 GPU hosting is designed for AI inference, machine learning development, high-performance computing, and professional GPU workloads. It is commonly used by developers and data scientists who need reliable GPU acceleration for model training, general computing, and advanced computational tasks.

Yes. The NVIDIA A40 GPU is well suited to machine learning pipelines and AI inference workloads. Its large VRAM capacity and strong parallel processing performance make it effective for running trained models in production environments and for handling large datasets.

The NVIDIA A40 GPU includes 48 GB of GDDR6 memory, allowing it to run large AI models, deep learning frameworks, and high-performance computing workloads that require substantial GPU memory capacity.
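To judge whether a model fits in 48 GB, a common first check is the memory needed just for the weights: parameter count times bytes per parameter (4 for FP32, 2 for FP16/BF16, 1 for INT8). The sketch below treats 1 GB as 10^9 bytes. It uses a hypothetical `headroom` fraction to reserve memory for activations, KV cache, and framework overhead. Both the function names and the headroom parameter are illustrative assumptions. The weights-only figure is a lower bound. Training needs considerably more memory for gradients and optimizer state.

```python
# Bytes needed to store one parameter in each numeric format.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}


def weights_memory_gb(num_params: float, dtype: str = "fp16") -> float:
    """Memory (GB, 1 GB = 1e9 bytes) needed only to hold the model weights."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def max_params_for_memory(memory_gb: float, dtype: str = "fp16",
                          headroom: float = 0.0) -> float:
    """Largest parameter count whose weights fit in memory_gb.

    headroom (hypothetical) is the fraction of memory reserved for
    activations, KV cache, and runtime overhead; 0.0 means weights only.
    """
    if not 0.0 <= headroom < 1.0:
        raise ValueError("headroom must be in [0, 1)")
    usable_bytes = memory_gb * (1.0 - headroom) * 1e9
    return usable_bytes / BYTES_PER_PARAM[dtype]


if __name__ == "__main__":
    # A 7-billion-parameter model stored in FP16 needs about 14 GB for weights.
    print(weights_memory_gb(7e9, "fp16"))
    # Upper bound on FP16 parameters whose weights alone fit in 48 GB (24e9).
    print(max_params_for_memory(48, "fp16"))
```

In practice the usable parameter count is lower than the weights-only bound, which is why reserving headroom is the more realistic setting for inference planning.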

Yes. NVIDIA A40 GPU servers fully support modern AI and machine learning frameworks, including PyTorch, TensorFlow, CUDA applications, and other GPU-accelerated software tools used for AI development and model deployment.

NVIDIA A40 GPU servers are commonly used for AI inference, machine learning experiments, deep learning development, computer vision models, data analytics, and GPU-accelerated rendering workloads.

Colonelserver provides reliable GPU hosting infrastructure with high-performance networking, scalable compute resources, and stable GPU environments designed for AI developers, data scientists, and businesses running GPU-accelerated applications.