Free Website & Server Migration

Deploy high-performance NVIDIA B200 GPUs

Run next-generation NVIDIA B200 GPU servers for massive AI training, generative AI workloads, and high-performance computing on scalable cloud or dedicated GPU infrastructure.

- Optimized for AI & LLM workloads

- High-performance GPU infrastructure

- Scalable cloud deployment

- Enterprise-grade compute power

Starting at

€5.2 per GPU / hour

Customer Satisfaction

Colonelserver is rated on Google Reviews

Colonelserver is rated on Capterra

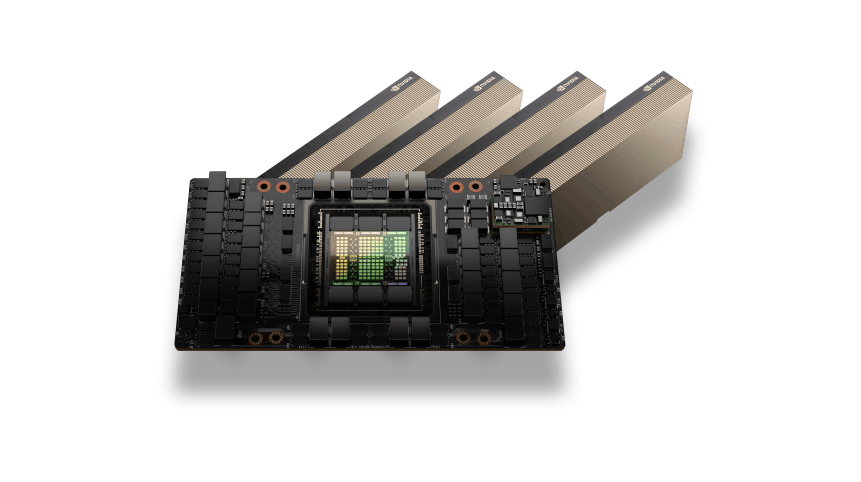

NVIDIA B200 GPU Architecture

The NVIDIA B200 GPU is built on the latest Blackwell architecture and delivers exceptional compute power for modern AI infrastructure. With ultra-high memory bandwidth and advanced Tensor Cores, B200 GPUs are designed to accelerate large-scale AI models, deep learning training, and data-intensive workloads.

This architecture enables faster model training, improved efficiency, and optimized performance for generative AI platforms, large language models, and enterprise AI deployments.

AI and HPC Performance

NVIDIA B200 GPUs are optimized for the most demanding AI and high-performance computing workloads. From training large language models to running complex data pipelines and simulations, B200 GPUs provide massive parallel compute power and scalable infrastructure.

Whether used for AI research, enterprise AI platforms, or advanced machine learning pipelines, B200 GPUs deliver high throughput, low latency, and efficient performance for modern compute environments.

NVIDIA B200 GPU Use Cases

Large Language Model Training

Train next-generation transformer models and large language models with powerful GPU acceleration and massive compute capacity.

Generative AI Platforms

Run generative AI applications such as image generation, video AI, and AI assistants on high-performance GPU infrastructure.

AI Research and Development

Develop and test advanced AI architectures, deep learning models, and experimental machine learning systems.

Scientific Computing

Accelerate complex scientific simulations, engineering workloads, and large-scale data modeling tasks.

High-Performance Data Processing

Process massive datasets and machine learning pipelines for enterprise analytics and AI applications.

Flexible B200 GPU Pricing

B200 GPU

Next-generation NVIDIA B200 GPU compute built for large-scale AI training, generative AI workloads, and high-performance computing environments.

$5.2 per GPU / hour

€0.09 per 10GB

NVIDIA B200 Blackwell architecture acceleration

Up to 192GB HBM3e ultra-high bandwidth GPU memory

Hourly pay-as-you-go GPU billing

High-performance NVMe storage

Ultra-fast 100Gbps networking

Designed for large AI model training

Scalable multi-GPU AI infrastructure

Deploy GPU servers within minutes

Optimized for generative AI and LLM workloads
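As a rough illustration of hourly pay-as-you-go billing, the sketch below multiplies the GPU count by the runtime and by the listed starting rate of 5.2 per GPU per hour. The helper `estimate_gpu_cost` is hypothetical and is not part of any Colonelserver API. It assumes purely linear billing: no rounding of partial hours, no minimum term, and no storage, bandwidth, or tax charges. This page does not state those rules, so the result is an estimate only.

```python
# Hypothetical cost estimator for hourly pay-as-you-go GPU billing.
# Assumption: flat rate per GPU per hour with purely linear billing
# (no rounding of partial hours, no minimum term, and no storage,
# bandwidth, or tax charges). Currency is whatever the rate is quoted in.

RATE_PER_GPU_HOUR = 5.2  # listed starting price per GPU per hour


def estimate_gpu_cost(num_gpus: int, hours: float,
                      rate: float = RATE_PER_GPU_HOUR) -> float:
    """Return the estimated compute cost, rounded to two decimals."""
    if num_gpus < 0 or hours < 0:
        raise ValueError("num_gpus and hours must be non-negative")
    # GPU-hours consumed multiplied by the hourly per-GPU rate.
    return round(num_gpus * hours * rate, 2)


if __name__ == "__main__":
    # Example: an 8-GPU node running for 24 hours (192 GPU-hours).
    print(estimate_gpu_cost(8, 24))
```

For example, an 8-GPU node running for 24 hours consumes 192 GPU-hours, which comes to 998.4 at the listed rate under these assumptions.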

Enterprise GPU Infrastructure

Need large-scale GPU capacity for AI training clusters or enterprise workloads?

Custom pricing

For multi-GPU deployments and dedicated clusters

Multi-GPU clusters

Dedicated GPU servers

Custom CPU, RAM, and storage configurations

High-speed GPU networking infrastructure

Built for AI training and HPC workloads

Enterprise-grade performance and reliability

Scalable AI compute environments

Priority technical support

Enterprise Features of NVIDIA B200 GPU Servers

Blackwell GPU Architecture

Next-generation GPU architecture optimized for large-scale AI infrastructure and advanced compute workloads.

Massive GPU Memory Bandwidth

High-bandwidth memory designed for processing large datasets and training complex AI models efficiently.

Optimized for Generative AI

Ideal for training and running modern generative AI models, including LLMs and transformer-based architectures.

High-Speed GPU Infrastructure

GPU servers deployed on high-performance infrastructure with NVMe storage and fast networking.

Scalable Multi-GPU Environments

Easily scale from single GPU instances to large multi-GPU clusters for enterprise AI workloads.

Flexible Cloud or Dedicated Deployment

Deploy B200 GPUs as cloud GPU instances or dedicated GPU servers depending on your infrastructure needs.

Need help choosing the right GPU infrastructure?

GPU Server Frequently Asked Questions

Find answers to common questions about NVIDIA B200 GPU servers, deployment options, pricing, and AI workload capabilities.

Live Chat

Reach us 24/7/365 through the chat widget.

NVIDIA B200 GPU hosting provides high-performance compute infrastructure powered by the NVIDIA Blackwell architecture. It is designed for advanced AI training, large-scale inference, deep learning models, and enterprise AI workloads that require massive parallel processing power.

The NVIDIA B200 GPU is built for next-generation AI computing. It is commonly used for training large language models, generative AI systems, deep learning research, scientific simulations, and large-scale data processing environments.

Yes. NVIDIA B200 is specifically optimized for training extremely large AI models and foundation models. Its advanced architecture and high memory bandwidth allow faster training cycles for transformer models, LLMs, and other demanding machine learning workloads.

NVIDIA B200 GPU hosting offers exceptional compute performance, faster AI model training, high scalability, and enterprise-grade reliability. It is an ideal solution for organizations that require powerful GPU infrastructure for artificial intelligence and data science workloads.

NVIDIA B200 GPU servers are ideal for AI startups, research labs, machine learning engineers, large enterprises, and technology companies building advanced AI systems such as generative models, recommendation engines, and large-scale analytics platforms.

Yes. NVIDIA B200 GPU hosting supports modern AI frameworks such as PyTorch, TensorFlow, CUDA-based applications, and other GPU-accelerated machine learning tools, allowing developers to train and deploy AI models efficiently.

Yes. NVIDIA B200 GPUs are designed to accelerate generative AI and large language models. They are widely used for training and running LLMs, chatbots, multimodal AI systems, and other advanced AI applications.

Dedicated NVIDIA B200 hosting provides exclusive GPU access, predictable performance, full control over the software environment, and improved stability for long-running AI training tasks and production workloads.

Compared to CPU-only servers, NVIDIA B200 GPUs provide dramatically faster processing for AI workloads and parallel computing tasks. This significantly reduces training times and improves performance for deep learning and data-intensive applications.

Colonelserver provides high-performance GPU infrastructure, reliable network connectivity, and scalable GPU hosting solutions designed for AI developers, data scientists, and enterprises that require powerful and stable GPU compute resources.