Free Website & Server Migration

Deploy Powerful NVIDIA L40S GPUs

Run high-performance NVIDIA L40S GPU servers built for AI inference, generative AI, rendering workloads, and high-performance computing on scalable cloud or dedicated GPU infrastructure.

- Optimized for AI & LLM workloads

- High-performance GPU infrastructure

- Scalable cloud deployment

- Enterprise-grade compute power

Starting at

€1.50 per GPU / hour

Our Customer Satisfaction

Colonel is rated on Google Reviews

Colonel is rated on Capterra

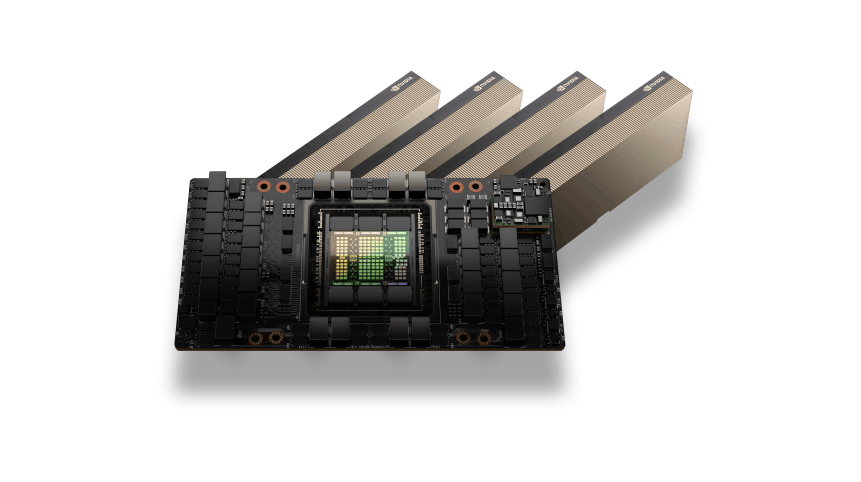

NVIDIA L40S GPU Architecture

The NVIDIA L40S GPU is based on the Ada Lovelace architecture and is designed to accelerate modern AI inference workloads, generative AI applications, and graphics-intensive compute tasks. With powerful Tensor Cores and large GPU memory capacity, L40S GPUs deliver strong performance for machine learning, AI development, and advanced visualization workloads.

Its architecture enables efficient parallel processing for AI inference pipelines and GPU-accelerated applications, making it an ideal fit for modern data center infrastructure.

AI Inference and Graphics Performance

NVIDIA L40S GPUs are optimized for high-performance AI inference, generative AI platforms, and professional visualization workloads. They provide strong acceleration for machine learning pipelines, rendering tasks, and GPU-based applications.

From running AI assistants and LLM inference to processing complex graphics workloads and simulations, L40S GPUs deliver reliable performance and scalable infrastructure for demanding compute environments.

Use Cases for NVIDIA L40S GPUs

AI Inference Workloads

Run large language models and AI inference pipelines efficiently with GPU acceleration.

Generative AI Applications

Power image generation, video AI, and modern generative AI platforms.

GPU Rendering

Accelerate 3D rendering, animation production, and visual effects processing.

Machine Learning Development

Train and test machine learning models in GPU-accelerated environments.

Data Processing and Analytics

Process large datasets and machine learning pipelines for enterprise AI workloads.

Flexible L40S GPU Pricing

L40S GPU

High-performance NVIDIA L40S GPU compute for AI inference, generative AI applications, and GPU rendering workloads.

$1.50 per GPU / hour

Best Price

NVIDIA L40S GPU acceleration

48 GB GDDR6 GPU memory

Hourly pay-as-you-go GPU billing

High-performance NVMe storage

Fast 10–100 Gbit/s networking

Ideal for AI inference workloads

Scalable GPU cloud infrastructure

Deploy GPU instances within minutes

Optimized for generative AI and rendering workloads
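With hourly pay-as-you-go billing, a job's cost is roughly GPUs × billable hours × hourly rate. The sketch below assumes the listed starting rate of 1.50 per GPU per hour (this page shows it in both € and $) and, as a labeled assumption, that partial hours are rounded up to full hours; the function name `estimate_cost` and the `round_up_partial_hours` switch are hypothetical. Check the actual billing terms before relying on these numbers.

```python
import math

# Assumption: the listed starting rate per GPU per hour (shown as both EUR and USD on this page).
HOURLY_RATE_PER_GPU = 1.50


def estimate_cost(num_gpus: int, hours: float, rate: float = HOURLY_RATE_PER_GPU,
                  round_up_partial_hours: bool = True) -> float:
    """Estimate pay-as-you-go cost for running `num_gpus` GPUs for `hours` hours.

    Assumption (hypothetical): partial hours are billed as full hours when
    `round_up_partial_hours` is True.
    """
    if num_gpus < 0 or hours < 0 or rate < 0:
        raise ValueError("num_gpus, hours and rate must be non-negative")
    billable_hours = math.ceil(hours) if round_up_partial_hours else hours
    return round(num_gpus * billable_hours * rate, 2)


if __name__ == "__main__":
    # 4 GPUs for a 10-hour batch job: 4 * 10 * 1.50
    print(estimate_cost(4, 10))  # 60.0
    # 2 GPUs for 2.5 hours, rounded up to 3 billable hours: 2 * 3 * 1.50
    print(estimate_cost(2, 2.5))  # 9.0
    # The same job billed by the exact fraction: 2 * 2.5 * 1.50
    print(estimate_cost(2, 2.5, round_up_partial_hours=False))  # 7.5
```

Passing `rate` explicitly lets you plug in a different quoted price, for example one from a custom enterprise agreement.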

Enterprise GPU Infrastructure

Need large-scale GPU capacity for AI training clusters or enterprise workloads?

Custom pricing

For multi-GPU deployments and dedicated clusters

Multi-GPU clusters

Dedicated GPU servers

Custom CPU, RAM, and storage configurations

High-speed GPU networking infrastructure

Built for AI training and HPC workloads

Enterprise-grade performance and reliability

Scalable AI compute environments

Priority technical support

Enterprise Features of NVIDIA L40S GPU Servers

Ada Lovelace GPU Architecture

Built on the NVIDIA Ada Lovelace architecture, optimized for AI inference and graphics workloads.

High-Capacity GPU Memory

48 GB of GPU memory for processing complex AI models and large datasets.

Optimized for AI and Rendering

Ideal for generative AI, LLM inference, and professional GPU rendering workloads.

High-Speed GPU Infrastructure

GPU servers deployed on high-performance infrastructure with NVMe storage and fast networking.

Scalable GPU Environments

Scale from single GPU instances to larger multi-GPU compute environments.

Flexible Cloud or Dedicated Deployment

Deploy L40S GPUs as cloud GPU instances or dedicated GPU servers, depending on your infrastructure requirements.

Need help choosing the right GPU infrastructure?

Frequently Asked Questions About GPU Servers

Find answers to common questions about NVIDIA L40S GPU servers, deployment options, pricing, and AI workload capabilities.

Live Chat

Available 24/7/365 through the chat widget.

NVIDIA L40S GPU hosting provides high-performance GPU infrastructure designed for AI inference, machine learning workloads, real-time rendering, and data processing. The L40S GPU is based on the Ada Lovelace architecture and delivers strong performance for both AI and graphics-accelerated workloads.

The NVIDIA L40S GPU is commonly used for AI inference, generative AI applications, computer vision models, real-time rendering, and GPU-accelerated data processing. It is widely deployed in data centers for workloads that require strong AI performance and high efficiency.

The NVIDIA L40S GPU includes 48 GB of GDDR6 memory, which allows it to handle demanding workloads such as AI inference pipelines, machine learning experiments, rendering tasks, and GPU-accelerated applications.
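A quick way to judge whether a model fits in 48 GB is to multiply its parameter count by the bytes each weight takes at a given precision. The sketch below does that arithmetic in decimal GB. The 1.2× `overhead` factor for KV cache, activations, and runtime buffers is a hypothetical rule of thumb, not a measured value, and the helper names are illustrative only. Usable memory reported on a real server may also be somewhat lower than the nominal 48 GB.

```python
# Bytes needed per model parameter at common weight precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}

# Nominal L40S memory from the spec above, in decimal GB.
L40S_MEMORY_GB = 48.0


def weights_gb(num_params: float, precision: str) -> float:
    """Memory needed for the model weights alone, in decimal GB."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def fits_on_l40s(num_params: float, precision: str, overhead: float = 1.2,
                 gpu_memory_gb: float = L40S_MEMORY_GB) -> bool:
    """Rough check: weights times a hypothetical overhead factor must fit in GPU memory."""
    return weights_gb(num_params, precision) * overhead <= gpu_memory_gb


if __name__ == "__main__":
    for params, precision in [(7e9, "fp16"), (70e9, "fp16"), (70e9, "int4")]:
        need = weights_gb(params, precision)
        print(f"{params / 1e9:.0f}B @ {precision}: {need:.1f} GB weights, "
              f"fits: {fits_on_l40s(params, precision)}")
```

Under these assumptions, a 7B-parameter model in FP16 needs about 14 GB for its weights and fits comfortably. A 70B model fits on a single card only when quantized to around 4 bits. Larger FP16 models would need the multi-GPU setups described under Enterprise GPU Infrastructure.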

Yes. NVIDIA L40S GPUs are highly optimized for AI inference workloads and generative AI applications. They deliver excellent performance for running trained AI models, recommendation systems, and real-time AI services.

Yes. NVIDIA L40S GPU servers fully support popular AI frameworks, including PyTorch, TensorFlow, CUDA applications, and other GPU-accelerated development tools used for machine learning and AI deployment.
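After provisioning a server, you can confirm that PyTorch actually sees the GPU before launching a workload. The sketch below assumes a CUDA-enabled PyTorch build is installed on the server. It uses the standard `torch.cuda.is_available`, `torch.cuda.device_count`, and `torch.cuda.get_device_properties` calls and returns an empty list when PyTorch or a CUDA device is missing, so it also runs on machines without a GPU. The helper name `visible_cuda_devices` is hypothetical, and the exact device name string reported on a given server is not specified here.

```python
def visible_cuda_devices():
    """Return (name, total memory in GB) for each CUDA device PyTorch can see.

    Returns an empty list when PyTorch is not installed or no CUDA device is visible.
    """
    try:
        import torch
    except ImportError:
        return []
    if not torch.cuda.is_available():
        return []
    devices = []
    for index in range(torch.cuda.device_count()):
        props = torch.cuda.get_device_properties(index)
        # total_memory is reported in bytes; convert to decimal GB.
        devices.append((props.name, props.total_memory / 1e9))
    return devices


if __name__ == "__main__":
    devices = visible_cuda_devices()
    if not devices:
        print("No CUDA device visible to PyTorch (or PyTorch is not installed).")
    for name, mem_gb in devices:
        print(f"{name}: {mem_gb:.1f} GB")
```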

Colonelserver provides reliable GPU hosting infrastructure with high-performance networking and scalable compute resources. NVIDIA L40S GPU servers are designed to support AI workloads, machine learning development, and high-performance GPU applications.