Deploy high-performance NVIDIA L40S GPUs

Run powerful NVIDIA L40S GPU servers designed for AI inference, generative AI, rendering workloads, and high-performance computing with scalable cloud or dedicated GPU infrastructure.

- Optimized for AI & LLM workloads

- High-performance GPU infrastructure

- Scalable cloud deployment

- Enterprise-grade compute performance

Starting at

€1.50 per GPU / hour

Our customer satisfaction

Colonel is rated on Google Reviews

Colonel is rated on Capterra

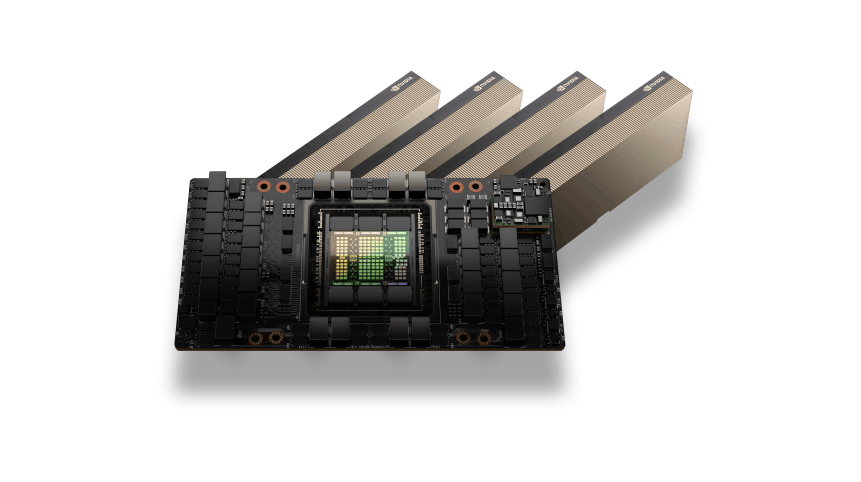

NVIDIA L40S GPU Architecture

The NVIDIA L40S GPU is built on the Ada Lovelace architecture and is designed to accelerate modern AI inference workloads, generative AI applications, and graphics-intensive computing tasks. With powerful Tensor Cores and large GPU memory capacity, L40S GPUs deliver strong performance for machine learning, AI development, and advanced visualization workloads.

Its architecture enables efficient parallel processing for AI inference pipelines and GPU-accelerated applications, making it an ideal solution for modern data center infrastructure.

AI Inference and Graphics Performance

NVIDIA L40S GPUs are optimized for high-performance AI inference, generative AI platforms, and professional visualization workloads. They provide powerful acceleration for machine learning pipelines, rendering tasks, and GPU-powered applications.

From running AI assistants and LLM inference to processing complex graphics workloads and simulations, L40S GPUs deliver reliable performance and scalable infrastructure for demanding compute environments.

NVIDIA L40S GPU Use Cases

AI Inference Workloads

Run large language models and AI inference pipelines efficiently with GPU acceleration.

Generative AI Applications

Power image generation, video AI, and modern generative AI platforms.

GPU Rendering

Accelerate 3D rendering, animation production, and visual effects processing.

Machine Learning Development

Train and test machine learning models in GPU-accelerated environments.

Data Processing and Analytics

Process large datasets and machine learning pipelines for enterprise AI workloads.

Flexible L40S GPU Pricing

L40S GPU

High-performance NVIDIA L40S GPU compute designed for AI inference, generative AI applications, and GPU rendering workloads.

$1.50 per GPU / hour

Best price

Top recommended

NVIDIA L40S GPU acceleration

48GB GDDR6 GPU memory

Hourly pay-as-you-go GPU billing

Powerful NVMe storage

Fast 10-100 Gbps networking

Ideal for AI inference workloads

Scalable GPU cloud infrastructure

Deploy GPU instances within minutes

Optimized for generative AI and rendering workloads
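As a rough illustration of hourly pay-as-you-go billing, the sketch below multiplies the listed starting rate of 1.50 per GPU per hour by the number of GPUs and hours. The function name `estimate_cost` is hypothetical, and the sketch assumes simple linear billing with no minimum term, rounding rules, or taxes, none of which are stated on this page.

```python
from decimal import Decimal

# Starting rate listed above, per GPU per hour (currency as shown on the page).
RATE_PER_GPU_HOUR = Decimal("1.50")


def estimate_cost(gpus: int, hours: float, rate: Decimal = RATE_PER_GPU_HOUR) -> Decimal:
    """Estimate the charge for `gpus` GPUs running for `hours` hours.

    Assumes plain linear billing; actual invoices may differ.
    """
    if gpus < 0 or hours < 0:
        raise ValueError("gpus and hours must be non-negative")
    total = rate * gpus * Decimal(str(hours))
    return total.quantize(Decimal("0.01"))


if __name__ == "__main__":
    print(estimate_cost(1, 24))        # one GPU for a day
    print(estimate_cost(4, 24 * 30))   # four GPUs for a 30-day month
```

Because the rate is a starting price, the result is best read as a lower bound rather than a quote.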

Enterprise GPU Infrastructure

Need large-scale GPU capacity for AI training clusters or enterprise workloads?

Custom pricing

For multi-GPU deployments and dedicated clusters

Top recommended

Multi-GPU clusters

Dedicated GPU servers

Custom CPU, RAM, and storage configurations

Fast GPU network infrastructure

Designed for AI training and HPC workloads

Enterprise-grade performance and reliability

Scalable AI compute environments

Priority technical support

Enterprise Features of NVIDIA L40S GPU Servers

Ada Lovelace GPU Architecture

Built on NVIDIA Ada Lovelace architecture optimized for AI inference and graphics workloads.

High-Capacity GPU Memory

Large GPU memory designed to handle complex AI models and large datasets.

Optimized for AI and Rendering

Ideal for generative AI, LLM inference, and professional GPU rendering workloads.

High-Speed GPU Infrastructure

GPU servers deployed on high-performance infrastructure with NVMe storage and fast networking.

Scalable GPU Environments

Scale from single GPU instances to larger multi-GPU compute environments.

Flexible Cloud or Dedicated Deployment

Deploy L40S GPUs as cloud GPU instances or dedicated GPU servers based on your infrastructure needs.

Need help choosing the right GPU infrastructure?

GPU Server Frequently Asked Questions

Find answers to common questions about NVIDIA L40S GPU servers, deployment options, pricing, and AI workload capabilities.

Live chat

24/7/365 via the chat widget.

NVIDIA L40S GPU hosting provides high-performance GPU infrastructure designed for AI inference, machine learning workloads, real-time rendering, and data processing. The L40S GPU is built on the Ada Lovelace architecture and delivers strong performance for both AI and graphics-accelerated workloads.

The NVIDIA L40S GPU is commonly used for AI inference, generative AI applications, computer vision models, real-time rendering, and GPU-accelerated data processing. It is widely deployed in data centers for workloads that require strong AI performance and high efficiency.

The NVIDIA L40S GPU includes 48GB of GDDR6 memory, allowing it to handle demanding workloads such as AI inference pipelines, machine learning experiments, rendering tasks, and GPU-accelerated applications.
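To make the 48GB figure more concrete, here is a back-of-the-envelope sketch that estimates whether a model's weights fit on a single L40S. The helper names and the 20% headroom reserved for KV cache and activations are illustrative assumptions, not vendor guidance; real usage depends on batch size, context length, and the serving stack.

```python
GPU_MEMORY_GB = 48  # L40S memory capacity stated above

# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1, "int4": 0.5}


def weights_gb(params_billions: float, precision: str) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes)."""
    return params_billions * BYTES_PER_PARAM[precision]


def fits_on_one_gpu(params_billions: float, precision: str, headroom: float = 0.2) -> bool:
    """True if the weights fit while leaving `headroom` of memory free.

    The headroom fraction is a hypothetical allowance for KV cache,
    activations, and framework overhead.
    """
    budget = GPU_MEMORY_GB * (1 - headroom)
    return weights_gb(params_billions, precision) <= budget


if __name__ == "__main__":
    for size, precision in [(7, "fp16"), (13, "fp16"), (70, "fp16"), (70, "int4")]:
        print(size, precision, weights_gb(size, precision), fits_on_one_gpu(size, precision))
```

Under these assumptions, a 70-billion-parameter model in fp16 (about 140 GB of weights) would need several GPUs or quantization, while 7B and 13B models in fp16 fit on one card.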

Yes. NVIDIA L40S GPUs are highly optimized for AI inference workloads and generative AI applications. They deliver excellent performance for running trained AI models, recommendation systems, and real-time AI services.

Yes. NVIDIA L40S GPU servers fully support popular AI frameworks including PyTorch, TensorFlow, CUDA applications, and other GPU-accelerated development tools used for machine learning and AI deployment.
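After an instance is deployed, a quick check like the following can confirm that PyTorch sees the GPU. This is a minimal sketch: the function name `describe_gpu` is hypothetical, it only inspects device 0, and it assumes nothing about which drivers or images are preinstalled on the server.

```python
def describe_gpu() -> str:
    """Return a one-line summary of the first CUDA device PyTorch can see."""
    try:
        import torch  # imported lazily so the script still runs without PyTorch
    except ImportError:
        return "PyTorch is not installed"

    if not torch.cuda.is_available():
        return "PyTorch is installed, but no CUDA device is visible"

    props = torch.cuda.get_device_properties(0)
    return f"{props.name}: {props.total_memory / 1e9:.1f} GB"


if __name__ == "__main__":
    print(describe_gpu())
```

On a correctly configured L40S instance the summary should name the device and report roughly 48 GB of memory; anything else points to a driver or CUDA setup issue worth checking before starting a workload.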

Colonelserver offers reliable GPU hosting infrastructure with powerful networking and scalable compute resources. NVIDIA L40S GPU servers are designed to support AI workloads, machine learning development, and high-performance GPU applications.