
Deploy high-performance NVIDIA H200 GPUs

Run demanding AI workloads on powerful NVIDIA H200 GPUs with fast deployment, high-performance infrastructure, and scalable cloud compute designed for modern machine learning applications.

- Optimized for AI & LLM workloads

- High-performance GPU infrastructure

- Scalable cloud deployment

- Enterprise-class compute power

Starting at

€2.30 per GPU / hour

Our Customer Satisfaction

Top rated on Google Reviews

Top rated on Capterra

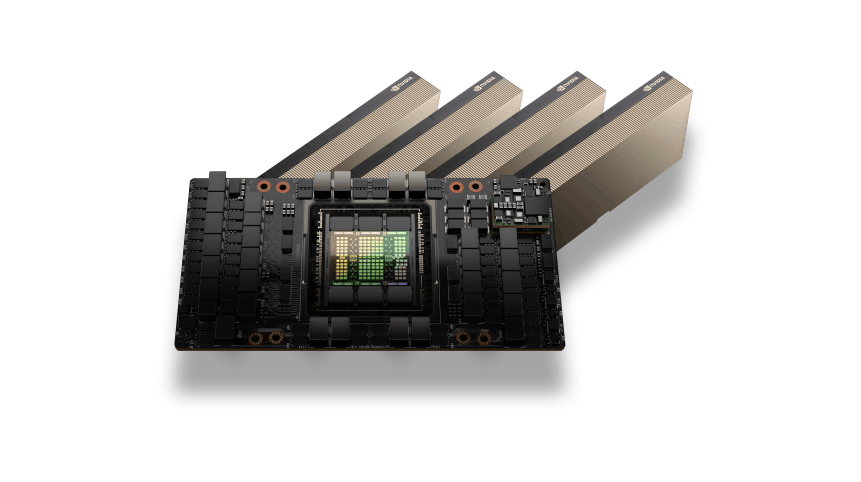

NVIDIA H200 GPU Architecture

The NVIDIA H200 GPU is built on the Hopper architecture and delivers exceptional performance for modern AI workloads. With massive HBM3e memory capacity and extremely high memory bandwidth, H200 GPUs are designed to handle large language models, deep learning training, and high-performance computing tasks.

AI and HPC Performance

NVIDIA H200 GPUs are optimized for demanding AI and HPC environments where large datasets and complex computations require powerful acceleration.

Whether running AI training pipelines, LLM inference, or scientific simulations, H200 GPUs provide the compute performance needed to process large workloads efficiently while maintaining low latency and high scalability.

NVIDIA H200 GPU Use Cases

AI Model Training

Train large-scale machine learning and deep learning models using the massive compute power of NVIDIA H200 GPUs. Ideal for training transformer models and other neural networks on large datasets.

LLM Inference

Deploy and run large language models (LLMs) such as GPT-style models, chatbots, and AI assistants with high-performance GPU inference.

High Performance Computing (HPC)

Accelerate scientific simulations, research workloads, and complex computational tasks that require massive parallel processing.

AI Data Processing

Process and analyze large datasets for AI pipelines, including preprocessing, feature extraction, and large-scale data analytics.

Rendering and Simulation

Run GPU-intensive workloads such as 3D rendering, video processing, and physics simulations that require powerful parallel GPU computing.

Flexible H200 GPU Pricing

H200 GPU

Flexible on-demand GPU compute for AI training, inference workloads, and high-performance applications.

$2.30 per GPU / hour

Best Price

Top Featured

- NVIDIA H200 GPU acceleration

- 141 GB HBM3e GPU memory

- Hourly pay-as-you-go billing

- High-performance NVMe storage

- Fast networking at 10–100 Gbit/s

- Ideal for AI training and inference

- Scalable GPU cloud infrastructure

- Deployment within minutes

- Optimized for LLM workloads
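
As a rough illustration of hourly pay-as-you-go billing, the sketch below estimates the cost of a job from the listed rate of 2.30 per GPU per hour. The function name and the example job size are hypothetical, and the sketch assumes billing is simply rate × GPUs × hours, with no minimum charge and no rounding of partial hours, which this page does not specify.

```python
from decimal import Decimal

# Listed on-demand rate per GPU per hour (currency as shown on the pricing card).
RATE_PER_GPU_HOUR = Decimal("2.30")


def estimate_gpu_cost(gpus: int, hours: float, rate: Decimal = RATE_PER_GPU_HOUR) -> Decimal:
    """Return rate * gpus * hours, rounded to two decimal places.

    Assumption: linear billing with no minimum charge and no rounding of
    partial hours. Check the actual billing terms before relying on this.
    """
    if gpus < 1 or hours < 0:
        raise ValueError("gpus must be >= 1 and hours must be >= 0")
    total = rate * gpus * Decimal(str(hours))
    return total.quantize(Decimal("0.01"))


# Hypothetical job: 8 GPUs for 24 hours.
example_cost = estimate_gpu_cost(8, 24)
print(example_cost)  # 441.60
```

Using `Decimal` rather than floats keeps the result exact to the cent, which matters when many small hourly charges are added up.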

Enterprise GPU Infrastructure

Need large GPU capacity for AI training clusters or enterprise workloads?

Custom pricing

For multi-GPU deployments and dedicated clusters

Top Featured

- Multi-GPU H200 clusters

- Dedicated GPU servers

- Custom CPU, RAM, and storage configurations

- High-speed GPU network infrastructure

- Built for AI training and HPC workloads

- Enterprise-grade performance and reliability

- Scalable AI compute environments

- Priority technical support

Enterprise Features of NVIDIA H200 GPU Servers

Extreme AI Training Performance

Leverage the massive compute power of NVIDIA H200 GPUs to train large-scale AI models, deep neural networks, and complex machine learning workloads with exceptional speed and efficiency.

Large HBM3e GPU Memory

H200 GPUs provide high-capacity HBM3e memory designed for demanding AI workloads, large language models, and high-performance data processing pipelines.
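
To make the memory capacity concrete, the sketch below estimates how much GPU memory a model's weights alone occupy and compares it with the 141 GB of HBM3e listed in the plan details. The helper names and model sizes are hypothetical. The estimate treats 141 GB as decimal gigabytes and ignores activations, KV cache, optimizer state, and framework overhead, so real requirements are higher.

```python
import math

H200_MEMORY_GB = 141  # HBM3e capacity per GPU, as listed in the plan details

# Bytes per parameter for common numeric formats.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}


def weight_memory_gb(params_billion: float, dtype: str) -> float:
    """Memory for model weights only, in decimal GB.

    (params_billion * 1e9 params) * bytes / (1e9 bytes per GB): the 1e9 cancels.
    """
    return params_billion * BYTES_PER_PARAM[dtype]


def min_gpus_for_weights(params_billion: float, dtype: str) -> int:
    """Smallest GPU count whose combined memory holds the weights alone."""
    needed = weight_memory_gb(params_billion, dtype)
    return max(1, math.ceil(needed / H200_MEMORY_GB))


# Hypothetical 70-billion-parameter model.
print(weight_memory_gb(70, "fp16"))      # 140 GB of weights
print(min_gpus_for_weights(70, "fp16"))  # 1 (weights only, almost no headroom)
print(min_gpus_for_weights(70, "fp32"))  # 2
```

A result that barely fits, like the FP16 case here, usually means more GPUs or a smaller numeric format are needed in practice once runtime memory is counted.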

Optimized for LLM Workloads

Run modern large language models and AI inference workloads efficiently with GPU architecture optimized for transformer models and generative AI applications.

High-Speed GPU Infrastructure

Our GPU servers are deployed on high-performance infrastructure with NVMe storage and fast networking, ensuring low latency and maximum compute performance.

Scalable GPU Deployment

Easily scale your compute environment from a single GPU instance to multi-GPU workloads depending on your AI training or inference requirements.

Flexible Cloud or Dedicated Deployment

Choose between on-demand cloud GPU instances for flexible workloads or dedicated GPU servers for long-running AI training and enterprise deployments.

Need help choosing the right GPU infrastructure?

GPU Server Frequently Asked Questions

Find answers to common questions about NVIDIA H200 GPU servers, deployment options, pricing, and AI workload capabilities.

Live Chat

Available 24/7/365 through the chat widget.

Our Object Storage infrastructure is hosted in high-performance European data centers. This ensures low latency, strong data protection standards, and full compliance with GDPR regulations. Additional locations may be added in the future as the platform expands.

Uploading data to Object Storage (ingress traffic) is completely free. You can upload files, backups, or application data without any transfer costs.

The monthly quota also covers outgoing traffic. Outgoing traffic beyond the included amount is billed separately.

No. The included storage and traffic quota applies to the total usage across all buckets in your account, not per bucket.

You can create multiple buckets and distribute your data across them while still using the same shared quota.

Storage usage is calculated using TB-hours (TB-h). This method measures both the amount of stored data and the duration it remains stored.
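
A TB-hour figure can be sketched as the stored volume multiplied by the time it stays stored, summed over the billing period. The example below is an illustrative calculation only: the function name and sample usage are hypothetical, and it assumes decimal terabytes, since this page does not say how usage is sampled or rounded.

```python
def tb_hours(intervals: list[tuple[float, float]]) -> float:
    """Sum stored_tb * hours over a list of (stored_tb, hours) intervals."""
    return sum(stored_tb * hours for stored_tb, hours in intervals)


# Hypothetical 30-day month: 2 TB for the first 10 days, then 5 TB for 20 days.
usage = [(2.0, 10 * 24), (5.0, 20 * 24)]

total = tb_hours(usage)
average_tb = total / (30 * 24)

print(total)       # 2880.0 TB-h
print(average_tb)  # 4.0 TB stored on average
```

Dividing the total by the hours in the period gives the average amount stored, which is often the easier number to compare against an included quota.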

Our Object Storage service is fully S3-compatible, which means it works with a wide range of existing tools and SDKs.

You can manage buckets, upload files, and control permissions using tools such as:

- AWS CLI

- rclone

- S3-compatible SDKs

- Backup software supporting S3 APIs

This allows easy integration with existing workflows and applications.
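
Because the service exposes an S3-compatible API, a standard S3 client can usually be pointed at it by overriding the endpoint. The sketch below uses boto3, one example of an S3-compatible SDK, which has to be installed separately. The endpoint URL, credentials, bucket name, and file names are placeholders, not values from this page.

```python
def client_kwargs(endpoint_url: str, access_key: str, secret_key: str) -> dict:
    """Build the keyword arguments passed to boto3.client("s3", ...)."""
    return {
        "endpoint_url": endpoint_url,
        "aws_access_key_id": access_key,
        "aws_secret_access_key": secret_key,
    }


def upload_and_list(bucket: str, local_path: str, key: str, **kwargs) -> list[str]:
    """Upload one file, then return object keys from the first listing page."""
    import boto3  # third-party; imported here so client_kwargs works without it

    s3 = boto3.client("s3", **kwargs)
    s3.upload_file(local_path, bucket, key)
    # list_objects_v2 returns at most 1,000 keys per call.
    response = s3.list_objects_v2(Bucket=bucket)
    return [obj["Key"] for obj in response.get("Contents", [])]


# Placeholder usage (requires real credentials and a reachable endpoint):
# kwargs = client_kwargs("https://s3.example.invalid", "ACCESS_KEY", "SECRET_KEY")
# print(upload_and_list("my-bucket", "backup.tar.gz", "backups/backup.tar.gz", **kwargs))
```

The only change compared with a typical Amazon S3 setup is the `endpoint_url` override; the rest of the client code stays the same.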

Incoming traffic (uploads) is free of charge.

Outgoing traffic beyond the included quota is billed at $1.20 per TB. This makes it suitable for backups, application storage, and scalable data workloads.
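
The overage rule can be illustrated with a small calculation. The included quota used below is a hypothetical figure, since this page does not state it, and the sketch assumes overage is billed linearly per decimal TB with no rounding up to whole terabytes.

```python
from decimal import Decimal

EGRESS_RATE_PER_TB = Decimal("1.20")  # USD per TB beyond the included quota


def egress_cost(used_tb: str, included_tb: str) -> Decimal:
    """Cost of outgoing traffic above the included quota.

    Incoming traffic is free, so only outgoing traffic is counted.
    Amounts are passed as strings to keep the decimal arithmetic exact.
    """
    overage = max(Decimal(used_tb) - Decimal(included_tb), Decimal("0"))
    return (overage * EGRESS_RATE_PER_TB).quantize(Decimal("0.01"))


# Hypothetical account with 1 TB of included outgoing traffic.
print(egress_cost("3.5", "1"))  # 3.00 (2.5 TB over quota)
print(egress_cost("0.4", "1"))  # 0.00 (within quota)
```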

Object Storage is designed for scalable data workloads and is commonly used for:

- Backup and disaster recovery

- Media storage (images, videos, assets)

- Static website files

- Application data storage

- Log and archive storage

It is ideal for handling large amounts of unstructured data.

You can create multiple buckets within your Object Storage account to organize your data. Buckets allow you to separate projects, applications, or environments while managing everything under the same storage quota.

Yes. Our Object Storage platform uses redundant storage infrastructure to protect your data against hardware failures. Data is stored across multiple storage nodes to ensure durability and high availability. This redundancy helps prevent data loss and keeps your files accessible even if a hardware component fails.

Yes. Our Object Storage is fully S3-compatible, meaning it supports the same API structure used by Amazon S3. This allows you to use existing tools and integrations such as AWS CLI, rclone, backup software, and S3 SDKs without modifying your workflow.