Deploy high-performance NVIDIA H200 GPUs

Run demanding AI workloads on powerful NVIDIA H200 GPUs with fast deployment, high-quality infrastructure, and scalable cloud compute designed for modern machine learning applications.

- Optimized for AI & LLM workloads

- High-performance GPU infrastructure

- Scalable cloud deployment

- Enterprise-grade compute performance

Starting at

€2.30 per GPU / hour

Our customer satisfaction

Colonel is rated on Google Reviews

Colonel is rated on Capterra

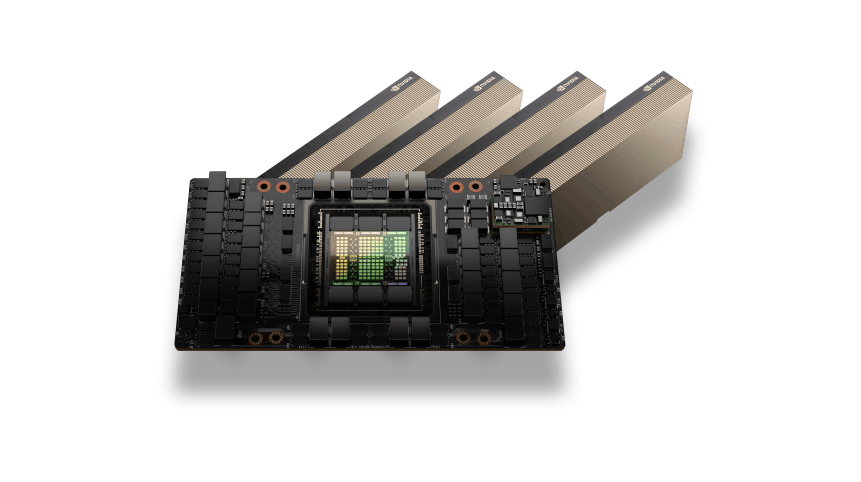

NVIDIA H200 GPU Architecture

The NVIDIA H200 GPU is built on the Hopper architecture and delivers exceptional performance for modern AI workloads. With 141 GB of HBM3e memory and very high memory bandwidth, H200 GPUs are designed to handle large language models, deep learning training, and high-performance computing tasks.

AI and HPC Performance

NVIDIA H200 GPUs are optimized for demanding AI and HPC environments where large datasets and complex computations require powerful acceleration.

Whether running AI training pipelines, LLM inference, or scientific simulations, H200 GPUs provide the compute performance needed to process large workloads efficiently while maintaining low latency and high scalability.

NVIDIA H200 GPU Use Cases

AI Model Training

Train large-scale machine learning and deep learning models using the massive compute power of NVIDIA H200 GPUs. Ideal for training transformer models and other neural networks on large datasets.

LLM Inference

Deploy and run large language models (LLMs) such as GPT-style models, chatbots, and AI assistants with high-performance GPU inference.

High Performance Computing (HPC)

Accelerate scientific simulations, research workloads, and complex computational tasks that require massive parallel processing.

AI Data Processing

Process and analyze large datasets for AI pipelines, including preprocessing, feature extraction, and large-scale data analytics.

Rendering and Simulation

Run GPU-intensive workloads such as 3D rendering, video processing, and physics simulations that require powerful parallel GPU computing.

Flexible H200 GPU Pricing

H200 GPU

Flexible on-demand GPU compute for AI training, inference workloads, and high-performance applications.

$2.30 per GPU / hour

Best price

Top recommended

- NVIDIA H200 GPU acceleration

- 141 GB HBM3e GPU memory

- Hourly billing

- High-performance NVMe storage

- Fast 10-100 Gbps networking

- Ideal for AI training and inference

- Scalable GPU cloud infrastructure

- Deploy within minutes

- Optimized for LLM workloads
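Because billing is hourly, the cost of a run can be estimated up front. The snippet below is a minimal sketch that assumes the listed rate of 2.30 per GPU per hour applies uniformly and that nothing else (storage, traffic, taxes) is charged; the function name `estimate_gpu_cost` is illustrative and not part of any provider tooling.

```python
from decimal import Decimal

# Listed on-demand rate per GPU per hour (currency as shown on this page).
RATE_PER_GPU_HOUR = Decimal("2.30")


def estimate_gpu_cost(gpu_count, hours, rate=RATE_PER_GPU_HOUR):
    """Return the estimated compute cost for gpu_count GPUs over the given hours.

    Assumption: a flat hourly rate with no minimums or discounts.
    """
    # Convert through str so fractional inputs like 1.5 stay exact in Decimal.
    return Decimal(str(gpu_count)) * Decimal(str(hours)) * rate


# Example: 8 GPUs for a 24-hour training run.
print(estimate_gpu_cost(8, 24))
```

Decimal arithmetic is used instead of floats so the result does not pick up binary rounding noise.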

Enterprise GPU Infrastructure

Need large-scale GPU capacity for AI training clusters or enterprise workloads?

Custom pricing

For multi-GPU deployments and dedicated clusters

Top recommended

- Multi-GPU H200 clusters

- Dedicated GPU servers

- Custom CPU, RAM, and storage configurations

- Fast GPU networking infrastructure

- Designed for AI training and HPC workloads

- Enterprise-grade performance and reliability

- Scalable AI compute environments

- Priority technical support

Enterprise Features of NVIDIA H200 GPU Servers

Extreme AI Training Performance

Leverage the massive compute power of NVIDIA H200 GPUs to train large-scale AI models, deep neural networks, and complex machine learning workloads with exceptional speed and efficiency.

Large HBM3e GPU Memory

H200 GPUs provide high-capacity HBM3e memory designed for demanding AI workloads, large language models, and high-performance data processing pipelines.
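To get a rough feel for what 141 GB of GPU memory means in practice, the sketch below estimates whether a model's weights fit on a single H200. It is a back-of-the-envelope calculation only: the bytes-per-parameter table reflects common numeric formats, while the 20% overhead for activations and KV cache is an illustrative assumption, not a measured figure. Real memory use depends on batch size, context length, and the serving framework.

```python
# GPU memory listed on this page, treated as decimal gigabytes.
H200_MEMORY_GB = 141

# Storage cost per parameter for common numeric formats.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "fp8": 1, "int4": 0.5}


def weights_gb(num_params, dtype):
    """Memory needed for the model weights alone, in decimal GB."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits_on_one_gpu(num_params, dtype, overhead_fraction=0.2):
    """Rough check: weights plus an assumed overhead must fit in GPU memory.

    overhead_fraction is a hypothetical allowance for activations and
    KV cache; tune it for the actual workload.
    """
    needed = weights_gb(num_params, dtype) * (1 + overhead_fraction)
    return needed <= H200_MEMORY_GB


# A 70-billion-parameter model: weights alone are 140 GB in FP16.
print(weights_gb(70e9, "fp16"))
print(fits_on_one_gpu(70e9, "fp16"))  # too tight once overhead is included
print(fits_on_one_gpu(70e9, "fp8"))
```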

Optimized for LLM Workloads

Run modern large language models and AI inference workloads efficiently with GPU architecture optimized for transformer models and generative AI applications.

High-Speed GPU Infrastructure

Our GPU servers are deployed on high-performance infrastructure with NVMe storage and fast networking, ensuring low latency and maximum compute performance.

Scalable GPU Deployment

Easily scale your compute environment from a single GPU instance to multi-GPU workloads depending on your AI training or inference requirements.

Flexible Cloud or Dedicated Deployment

Choose between on-demand cloud GPU instances for flexible workloads or dedicated GPU servers for long-running AI training and enterprise deployments.

Need help choosing the right GPU infrastructure?

GPU Server Frequently Asked Questions

Find answers to common questions about NVIDIA H200 GPU servers, deployment options, pricing, and AI workload capabilities.

Live chat

24/7/365 via the chat widget, ideal when you are in a hurry.

Our Object Storage infrastructure is hosted in high-performance European data centers. This ensures low latency, strict data protection standards, and full compliance with GDPR regulations. Additional locations may be added in the future as the platform expands.

Uploading data to Object Storage (inbound traffic) is completely free. You can upload files, backups, or application data without transfer fees.

The included monthly quota also covers outbound traffic. Additional outbound traffic beyond the included amount is billed separately.

No. The included storage and traffic quotas apply to the total usage across all buckets in your account, not per bucket.

You can create multiple buckets and distribute your data across them while still using the same shared quota.

Storage usage is calculated in TB-hours (TB-h). This method measures both the amount of data stored and how long it remains stored.
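As an illustration of TB-hour metering, the sketch below sums usage over periods in which the stored volume was constant. It assumes usage is simply stored terabytes multiplied by hours; the actual sampling interval and rounding rules used for billing are not stated here, and the function names are illustrative.

```python
def tb_hours(segments):
    """Sum TB-hours over (stored_tb, hours) segments of constant usage."""
    return sum(stored_tb * hours for stored_tb, hours in segments)


def average_stored_tb(segments):
    """Average stored volume over the whole period, in TB."""
    total_hours = sum(hours for _, hours in segments)
    return tb_hours(segments) / total_hours


# Example: 2 TB stored for 240 hours, then 3 TB for the next 480 hours.
usage = [(2, 240), (3, 480)]
print(tb_hours(usage))           # 2*240 + 3*480 TB-h
print(average_stored_tb(usage))  # average over the 720-hour period
```

Metering this way means data that is deleted partway through the month only counts for the hours it was actually stored.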

Our Object Storage service is fully S3-compatible, which means it works with a wide range of existing tools and SDKs.

You can manage buckets, upload files, and manage permissions using tools such as:

- AWS CLI

- rclone

- S3-compatible SDKs

- Backup software that supports S3 APIs

This allows easy integration with existing workflows and applications.
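Since the service speaks the S3 API, a standard S3 SDK can typically be pointed at it by overriding the endpoint. The following is a minimal sketch using `boto3` (a third-party package, installed with `pip install boto3`). The endpoint URL, credentials, and bucket name are placeholders, not real values for this service; take the actual ones from your account dashboard.

```python
def client_kwargs(endpoint_url, access_key, secret_key):
    """Build the keyword arguments for an S3 client aimed at a custom endpoint."""
    return {
        "service_name": "s3",
        "endpoint_url": endpoint_url,  # placeholder: provider's S3 endpoint
        "aws_access_key_id": access_key,
        "aws_secret_access_key": secret_key,
    }


def make_client(endpoint_url, access_key, secret_key):
    """Create a boto3 S3 client for an S3-compatible service."""
    import boto3  # imported lazily so the helper above works without it

    return boto3.client(**client_kwargs(endpoint_url, access_key, secret_key))


def upload_backup(client, bucket, local_path, key):
    """Upload a local file to the given bucket under the given object key."""
    client.upload_file(local_path, bucket, key)


def list_bucket_names(client):
    """Return the names of all buckets visible to these credentials."""
    return [b["Name"] for b in client.list_buckets()["Buckets"]]
```

A typical call sequence would be `client = make_client("https://s3.example.com", "ACCESS_KEY", "SECRET_KEY")` followed by `upload_backup(client, "my-bucket", "backup.tar.gz", "backups/backup.tar.gz")`, with every literal here standing in for your own values.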

Inbound traffic (uploads) is free.

Outbound traffic that exceeds the included quota is billed at $1.20 per TB. This makes it suitable for backups, application storage, and scalable data workloads.
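The overage rule can be sketched as a small calculation. The $1.20 per TB rate comes from the text above; the size of the included quota depends on the plan and is passed in as a parameter, and the function name is illustrative. Whether partial terabytes are billed pro rata is an assumption here.

```python
from decimal import Decimal

# Rate for outbound traffic beyond the included quota, per the text above.
EGRESS_RATE_PER_TB = Decimal("1.20")


def egress_overage_cost(used_tb, included_tb):
    """Cost of outbound traffic above the included quota.

    Assumption: overage is billed pro rata per TB; inbound traffic is free
    and therefore never enters this calculation.
    """
    overage = Decimal(str(used_tb)) - Decimal(str(included_tb))
    if overage <= 0:
        return Decimal("0")
    return overage * EGRESS_RATE_PER_TB


# Example: 7.5 TB sent out in a month with a hypothetical 5 TB included quota.
print(egress_overage_cost(7.5, 5))
```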

Object Storage is designed for scalable data workloads and is commonly used for:

- Backup and disaster recovery

- Media storage (images, videos, assets)

- Static website files

- Application data storage

- Log and archive storage

It is ideal for handling large volumes of unstructured data.

You can create multiple buckets within your Object Storage account to organize your data. Buckets let you separate projects, applications, or environments while managing everything under the same storage quota.

Yes. Our Object Storage platform uses redundant storage infrastructure to protect your data against hardware failures. Data is stored across multiple storage nodes to ensure durability and high availability. This redundancy helps prevent data loss and keeps your files accessible even if a hardware component fails.

Yes. Our Object Storage is fully S3-compatible, which means it supports the same API structure used by Amazon S3. This lets you use existing tools and integrations such as AWS CLI, rclone, backup software, and S3 SDKs without changing your workflow.